Data Import

The Data Import framework lets you load large volumes of data directly into the Capillary Data Platform. It is designed for data teams that need to migrate historical data into Capillary. Data loaded through this framework is written directly to the Capillary database and does not trigger loyalty programs, making it the right choice when you need to load data without affecting live loyalty processes.

What you can import

The framework is organised around profiles. Each profile corresponds to a specific type of data and defines the fields, validations, and rules that apply when loading that data into the platform.

The framework currently supports the Customer profile, which lets you load all customer-related data in a single import job. Support for additional profiles covering transactions, cards, and inventory will be available in subsequent releases.

| Profile | What can be loaded |
|---|---|
| Customer | Standard fields (name, identifiers, registration date), custom fields, extended fields, loyalty type, loyalty slab, slab expiry, and customer status |
| Transactions | (coming soon) Standard fields, custom fields, extended fields, line-item fields |
| Cards | (coming soon) Standard fields, custom fields, extended fields |
| Inventory | (coming soon) Standard fields, attributes, category, hierarchy |

Supported data sources

Once you know the profile and the data you want to import, you connect it to a source. The framework supports the following data sources:

- Databricks: Connect directly to a table in your organisation's Databricks workspace. This is the recommended source for large-scale migrations.

Note

When you select Databricks as your data source, the system connects to your organisation's `import_<orgid>` database, a dedicated namespace in your Databricks workspace that holds all import-ready tables for your organisation, where `<orgid>` is your organisation's unique ID in Capillary. For example, if your organisation ID is `1234`, your Databricks database name will be `import_1234`.

Import methods

After selecting a profile and a source, you choose how records should be written to the platform. Three methods are available:

| Method | Description |
|---|---|
| Insert | Creates new records only. If a matching record already exists, it is skipped. |
| Update | Updates existing records only. If no matching record is found, no action is taken. |
| Upsert | Updates a record if it already exists; creates a new one if it does not. |
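The difference between the three methods can be illustrated with a small in-memory sketch. This is not how the platform is implemented; the `apply_import` function, the dictionary store, and the record keys are hypothetical and only mirror the behaviour described in the table above.

```python
from typing import Dict

Record = Dict[str, str]


def apply_import(store: Dict[str, Record], incoming: Dict[str, Record], method: str) -> Dict[str, int]:
    """Apply incoming records to an in-memory store using insert, update, or upsert.

    Hypothetical illustration only: `store` stands in for existing customer
    records, keyed by whatever unique identifier the job uses.
    """
    if method not in ("insert", "update", "upsert"):
        raise ValueError(f"unknown import method: {method}")

    counts = {"added": 0, "updated": 0, "skipped": 0}
    for key, record in incoming.items():
        exists = key in store
        if exists and method in ("update", "upsert"):
            store[key].update(record)   # modify the existing record
            counts["updated"] += 1
        elif not exists and method in ("insert", "upsert"):
            store[key] = dict(record)   # create a new record
            counts["added"] += 1
        else:
            counts["skipped"] += 1      # insert on existing, or update on missing
    return counts


existing = {"c1": {"name": "Asha"}}
incoming = {"c1": {"name": "Asha K"}, "c2": {"name": "Ben"}}
print(apply_import(existing, incoming, "upsert"))
```

With the sample data, Insert would add only `c2`, Update would change only `c1`, and Upsert would do both.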

The mandatory fields required for the import vary depending on the method you select. For example, when using the Insert method for the Customer profile, the mandatory fields are the primary customer identifier, registration date, loyalty type, and the store or till code associated with the registration.

Note

You can run multiple import jobs simultaneously. There is no restriction on the number of import jobs that can run at the same time.

Prerequisites

Before creating an import job, ensure the following:

- The source table is available in Databricks under the `import_<org_id>` database. Newly created tables may take up to 10 minutes to appear in the selection list.

- The appropriate profile and import method for the data have been identified.

- All mandatory fields required for the selected profile and import method are present in the source table.

- Access to Databricks and the Graviton Import Cluster is enabled. This access allows visibility of the `import_<org_id>` database. To request access, raise a ticket with the Capillary Access Management team (ACM).
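Before creating a job, it can help to confirm that the source table carries a column for every mandatory field. The sketch below is one way to do that check offline. The column names in `INSERT_MANDATORY_COLUMNS` are hypothetical stand-ins for the Insert-method mandatory fields of the Customer profile, and the case-insensitive comparison is an assumption rather than documented platform behaviour.

```python
from typing import Iterable, List

# Hypothetical column names for: primary customer identifier, registration date,
# loyalty type, and store or till code. Replace with your own table's columns.
INSERT_MANDATORY_COLUMNS = ["mobile", "registration_date", "loyalty_type", "store_code"]


def missing_columns(source_columns: Iterable[str], required: Iterable[str]) -> List[str]:
    """Return the required columns that are absent from the source table."""
    available = {column.strip().lower() for column in source_columns}
    return [column for column in required if column.lower() not in available]


table_columns = ["Mobile", "registration_date", "first_name"]
print(missing_columns(table_columns, INSERT_MANDATORY_COLUMNS))
# ['loyalty_type', 'store_code']
```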

Creating an Import Job

To import data into the Capillary Database, you go through a four-step process:

Select the data source and the table you want to import.

Choose the import profile and how records should be written to the platform.

Map the fields from your source data to the corresponding profile fields in the platform.

Review the full configuration summary and trigger the import job for validation before the data is written to the platform.

Once you proceed, the system runs a validation pass on your data before writing anything to the platform. You can track validation progress in real time on the Job status page. After validation completes, the job moves to an approval stage. Only after you approve is the data written to the Capillary database.
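The validate, approve, then write sequence described above can be pictured as a small state machine. This is an illustrative sketch only: apart from "Validation in progress", the state names are hypothetical labels and not statuses confirmed by the platform.

```python
from enum import Enum


class JobState(Enum):
    DRAFT = "draft"
    VALIDATING = "validation in progress"
    AWAITING_APPROVAL = "awaiting approval"   # hypothetical label
    IMPORTING = "import in progress"          # hypothetical label
    COMPLETED = "completed"                   # hypothetical label
    REJECTED = "rejected"                     # hypothetical label


# Allowed moves: data is written only after validation and explicit approval.
TRANSITIONS = {
    JobState.DRAFT: {JobState.VALIDATING},
    JobState.VALIDATING: {JobState.AWAITING_APPROVAL},
    JobState.AWAITING_APPROVAL: {JobState.IMPORTING, JobState.REJECTED},
    JobState.IMPORTING: {JobState.COMPLETED},
    JobState.COMPLETED: set(),
    JobState.REJECTED: set(),
}


def advance(current: JobState, target: JobState) -> JobState:
    """Move a job to the target state, rejecting transitions that skip a stage."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.name} to {target.name}")
    return target


state = advance(JobState.DRAFT, JobState.VALIDATING)
state = advance(state, JobState.AWAITING_APPROVAL)
print(state.name)  # AWAITING_APPROVAL
```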

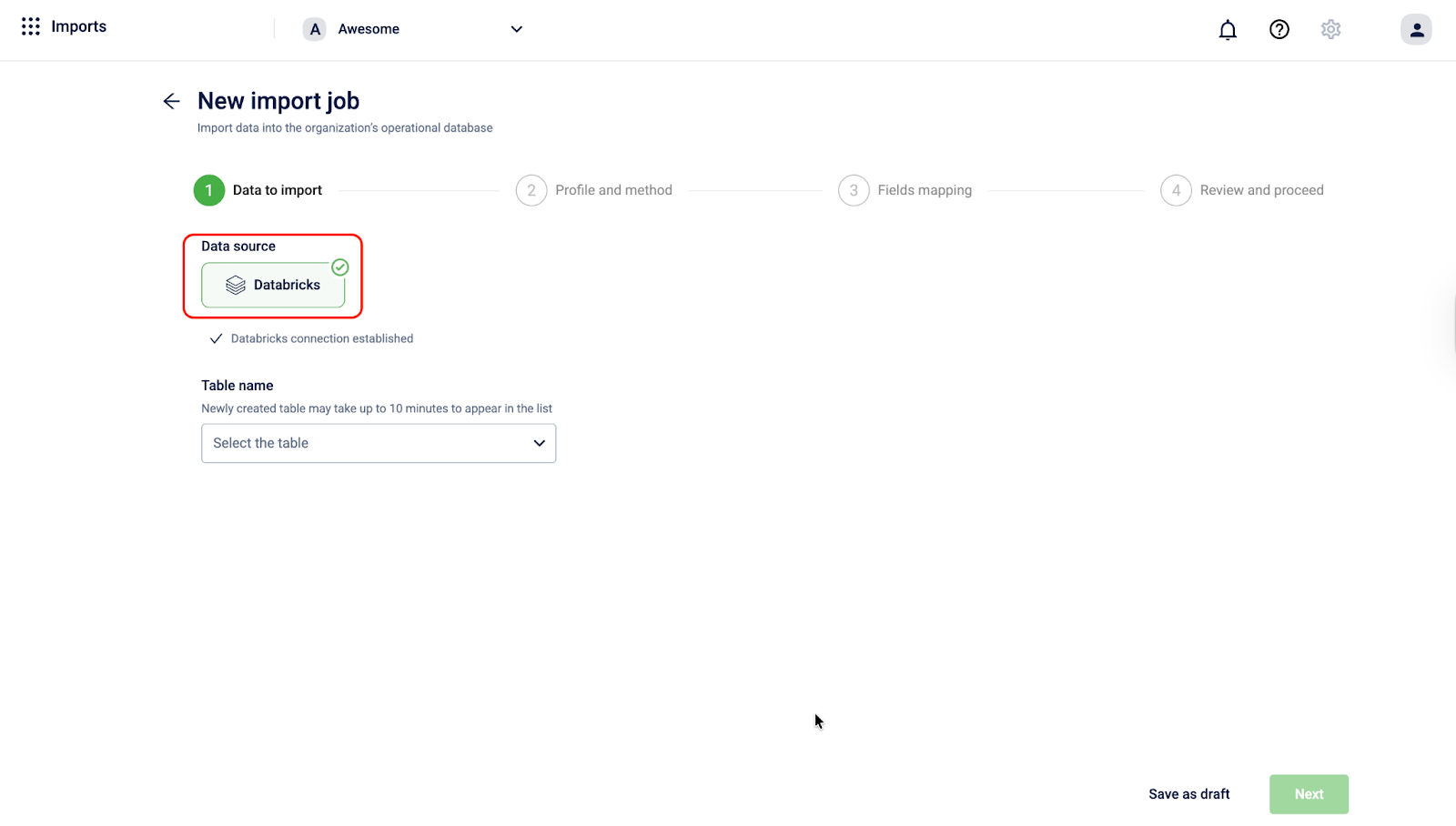

Step 1: Data to import

Select the data source and the table you want to import from your Databricks workspace.

-

On the imports listing page, click + New import job.

-

Under Data source, click Databricks. This connects the import job to your organisation's Databricks workspace as the source of the data. Once selected, the screen confirms: Databricks connection established.

-

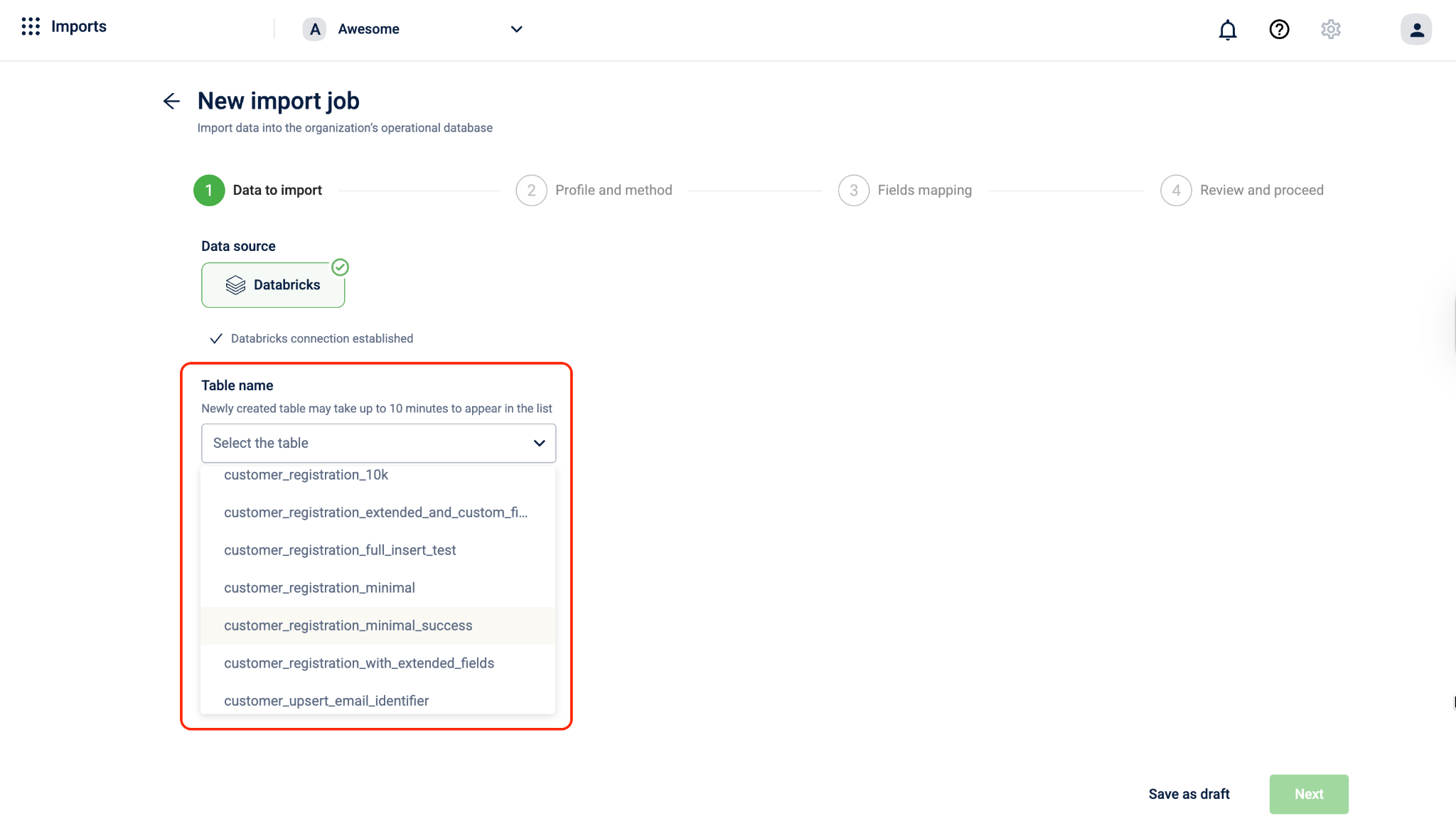

Under Table name, click the Select the table dropdown. The list displays tables that are already created in Databricks under the `import_<orgid>` database, a dedicated namespace in Databricks that holds all import-ready tables for your organisation.

Note: Newly created tables may take up to 10 minutes to appear in the list.

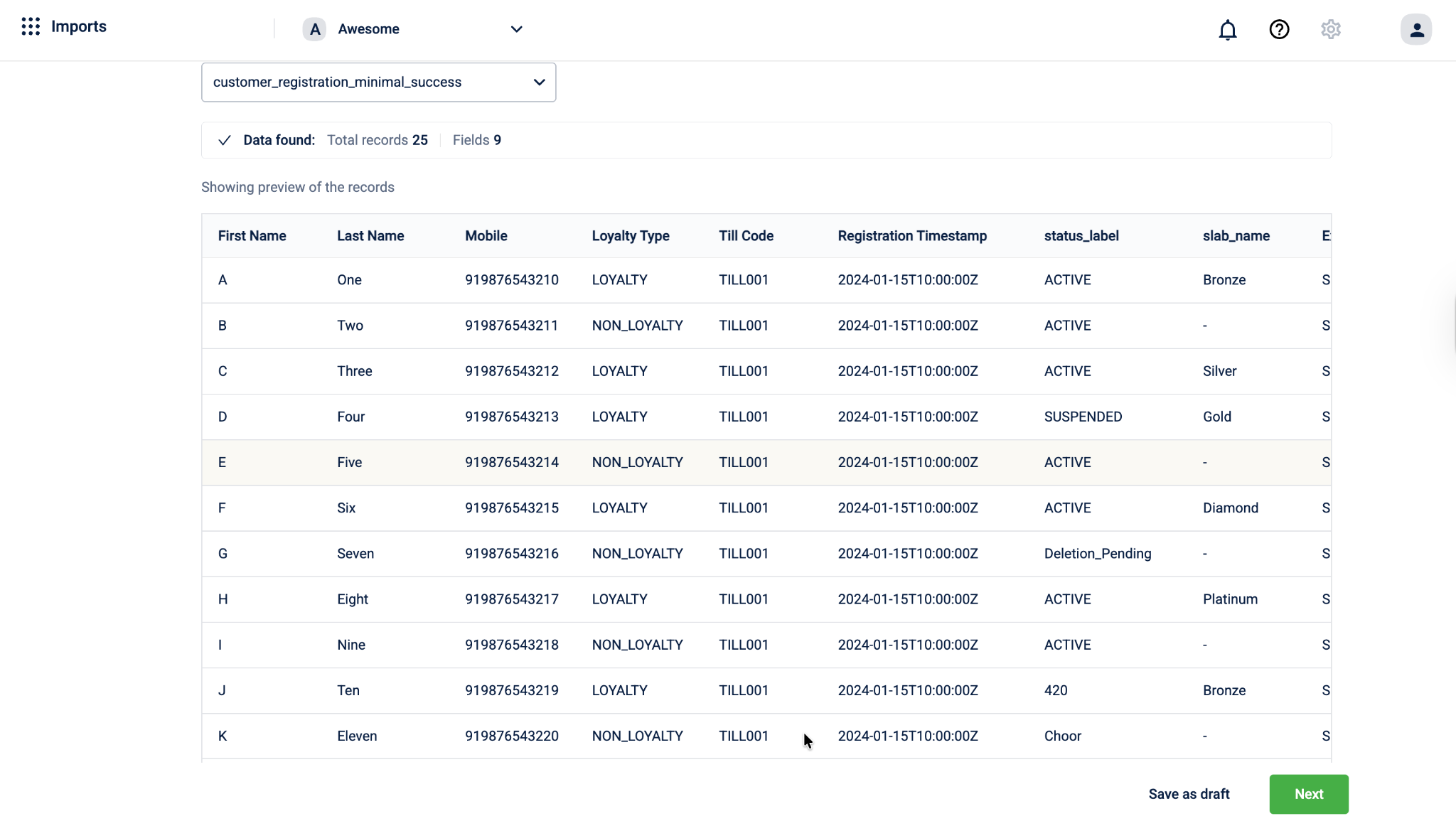

- After selecting a table, the system automatically displays a preview of the data so you can verify the contents before proceeding.

Example: Selecting customer_registration_10k shows:

-

Total records: 10,000

-

Fields: 18

-

A preview of the top 50 records

-

Once you have reviewed the preview, do one of the following:

- Select Save as draft to save the current configuration and continue later. A modal appears prompting you to enter a Job name and an optional Description. Click Save to store the draft. The job appears on the imports listing page, where you can return to edit and complete it later.

- Click Next to proceed to Step 2: Profile and method.

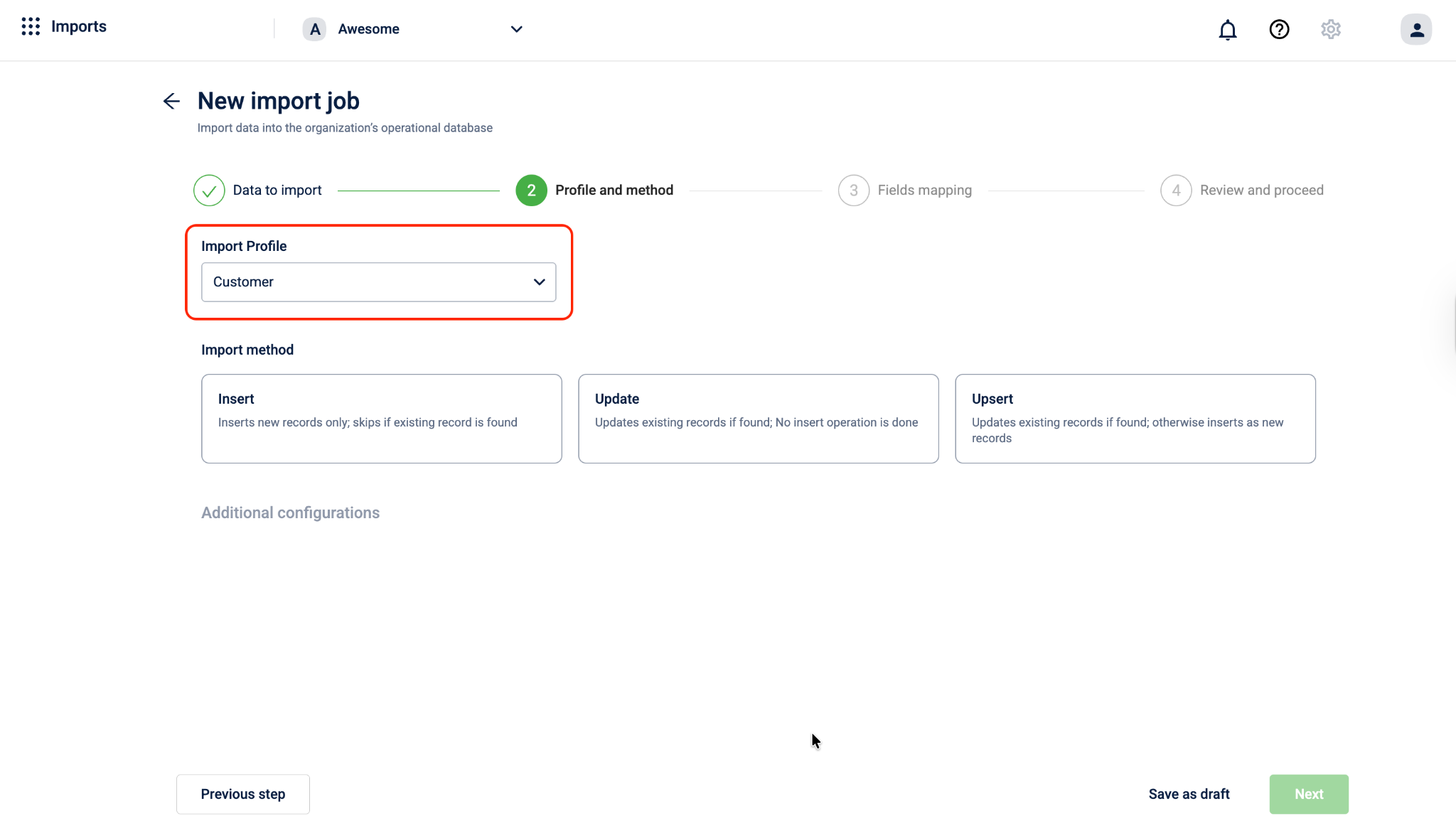

Step 2: Profile and method

Define the import profile that matches your data type and select how the records should be written to the Capillary database.

-

Under Import profile, click the dropdown and select the profile that matches the type of data you are importing. The profile tells the system what entity the data belongs to and how it should be validated and processed.

The following profiles are available:

- Customer: For importing customer registration or profile data.

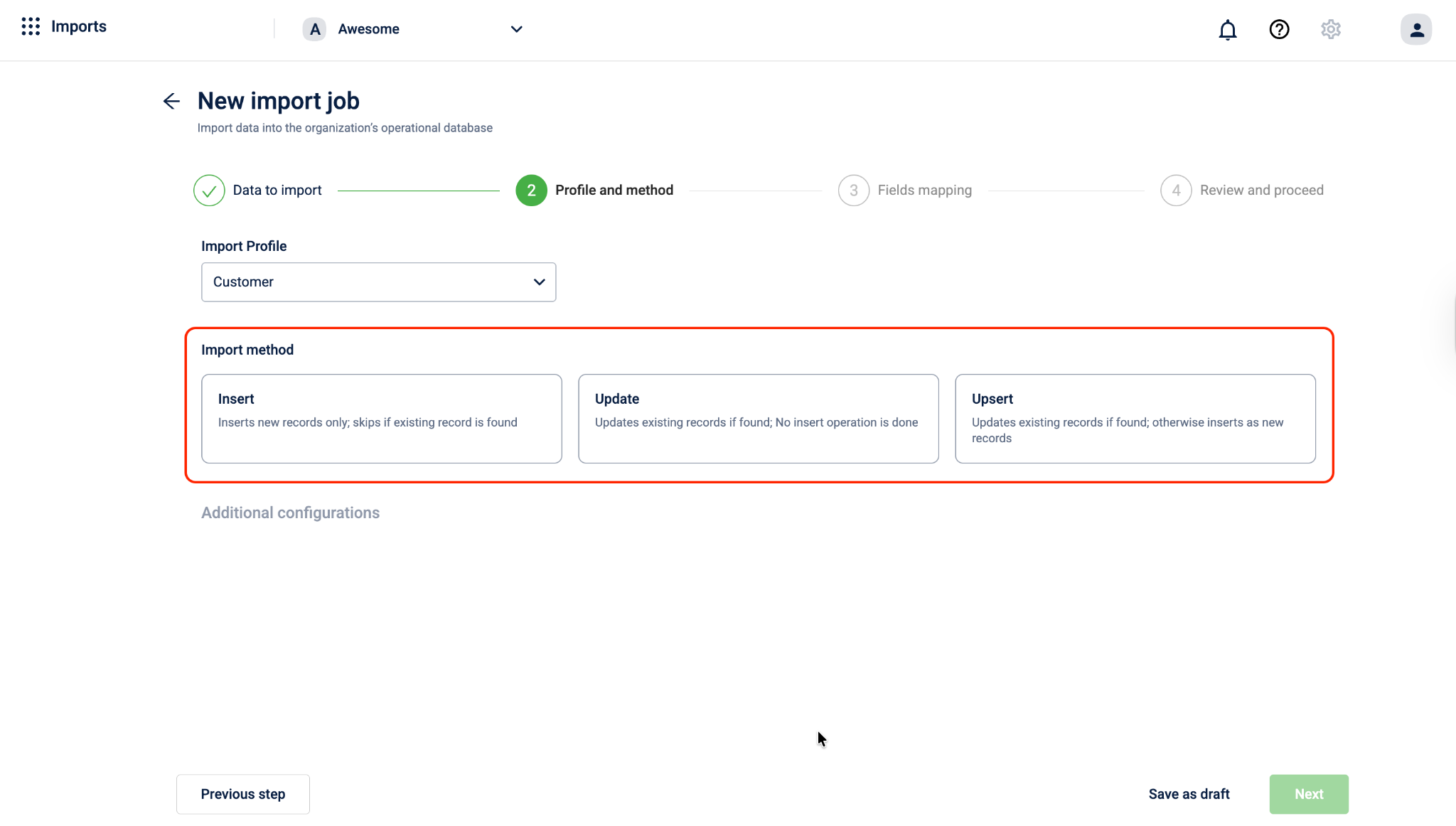

- Under Import method, select how the records from your table should be written to the Capillary database. Three methods are available:

| Method | Description |
|---|---|
| Insert | Inserts new records only; skips if an existing record is found |
| Update | Updates existing records if found; does not insert new records |
| Upsert | Updates existing records if found; otherwise inserts as new records |

Note: The Upsert method is supported even when CONF_ALLOW_REGISTRATION_FROM_ANY_IDENTIFIERS is enabled for the organization.

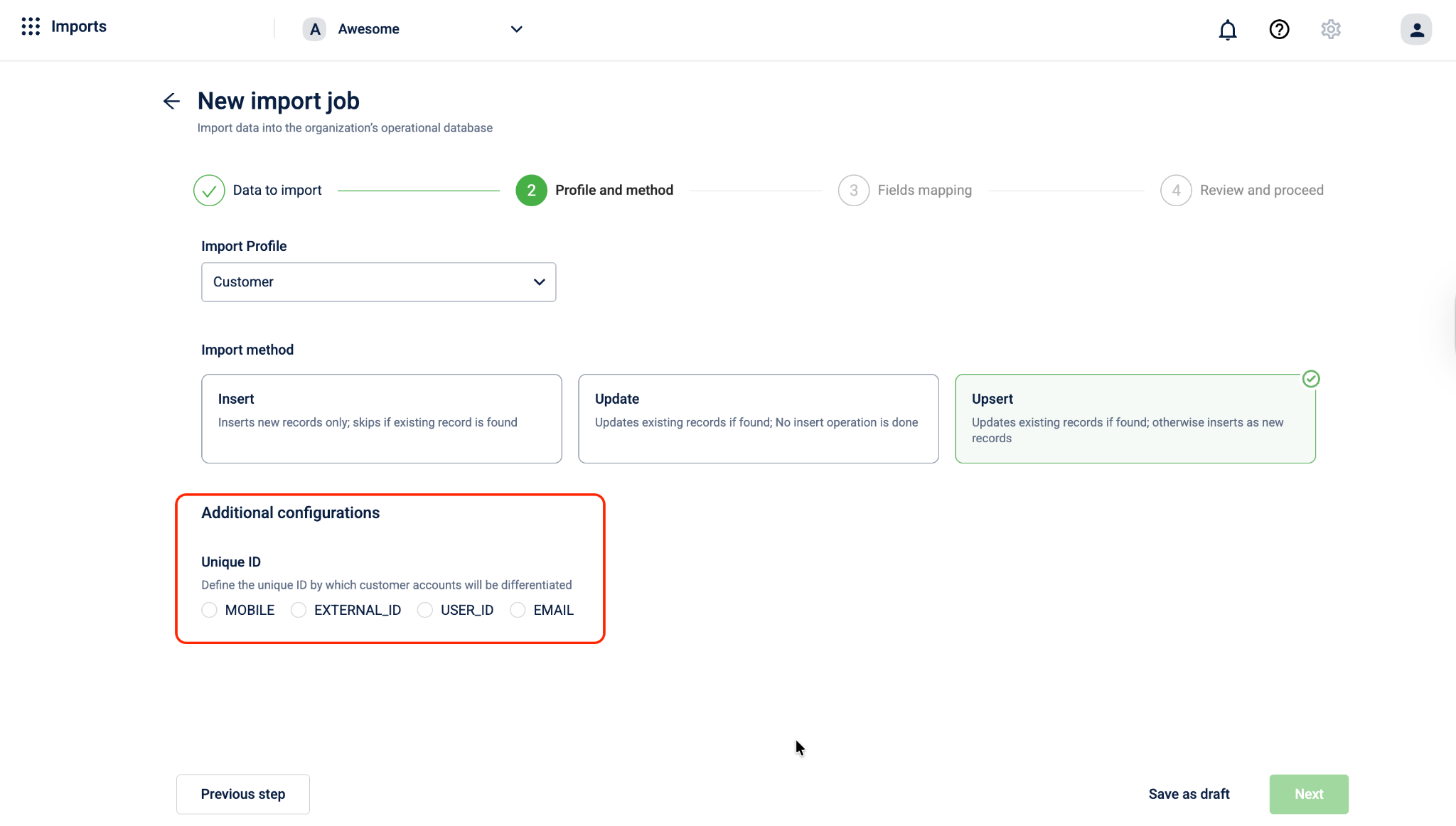

-

Under Additional configurations, set the Unique ID. This defines the identifier the system uses to distinguish between customer accounts during the import.

Select one of the following:

-

MOBILE

-

EXTERNAL_ID

-

USER_ID

-

EMAIL

-

Once you have configured the profile, method, and Unique ID, do one of the following:

- Select Previous step to go back to Step 1: Data to import.

- Select Save as draft to save the current configuration and continue later. A modal appears prompting you to enter a Job name and an optional Description. Click Save to store the draft. The job appears on the imports listing page, where you can return to edit and complete it later.

- Select Next to proceed to Step 3: Fields mapping.

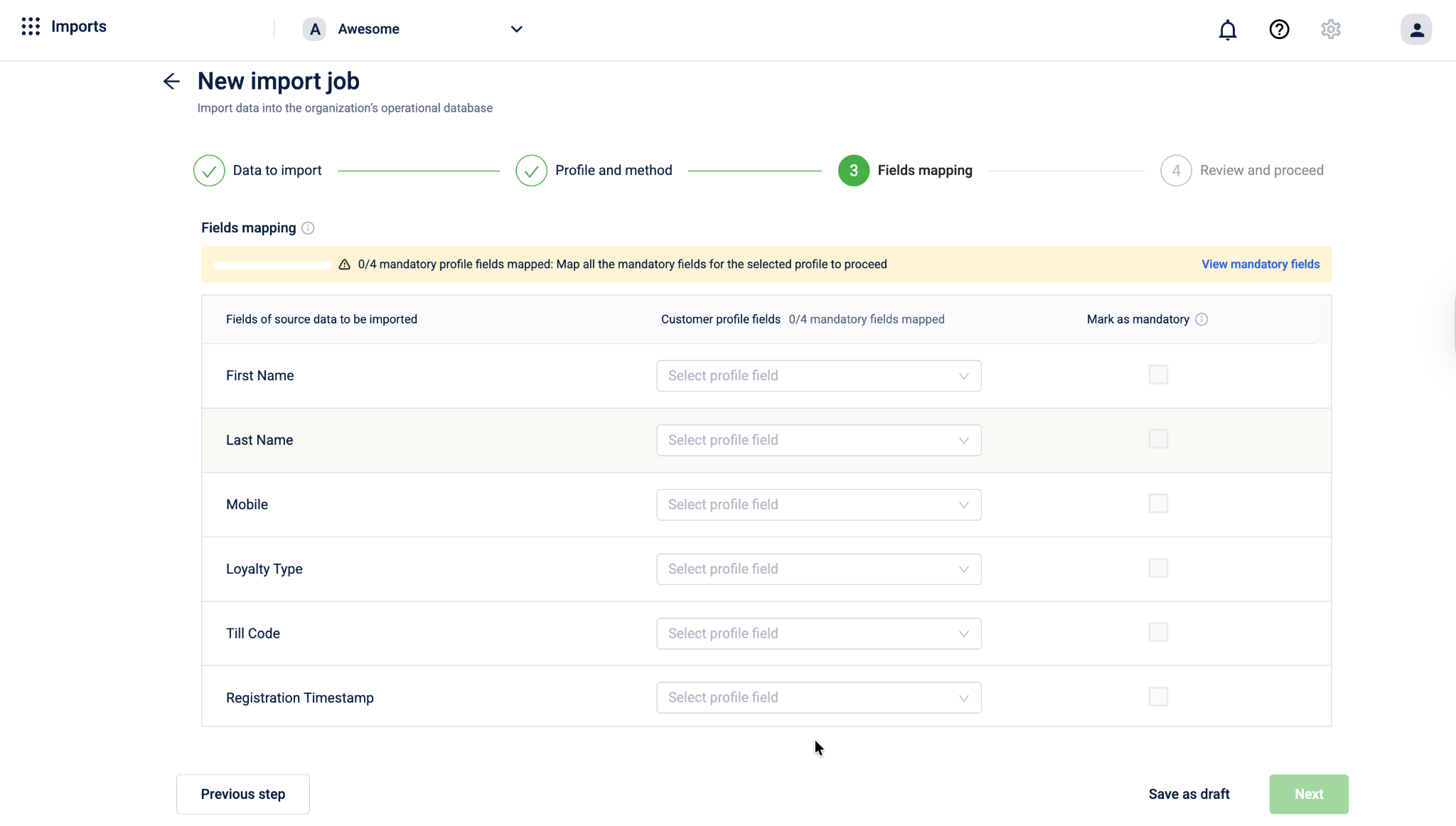

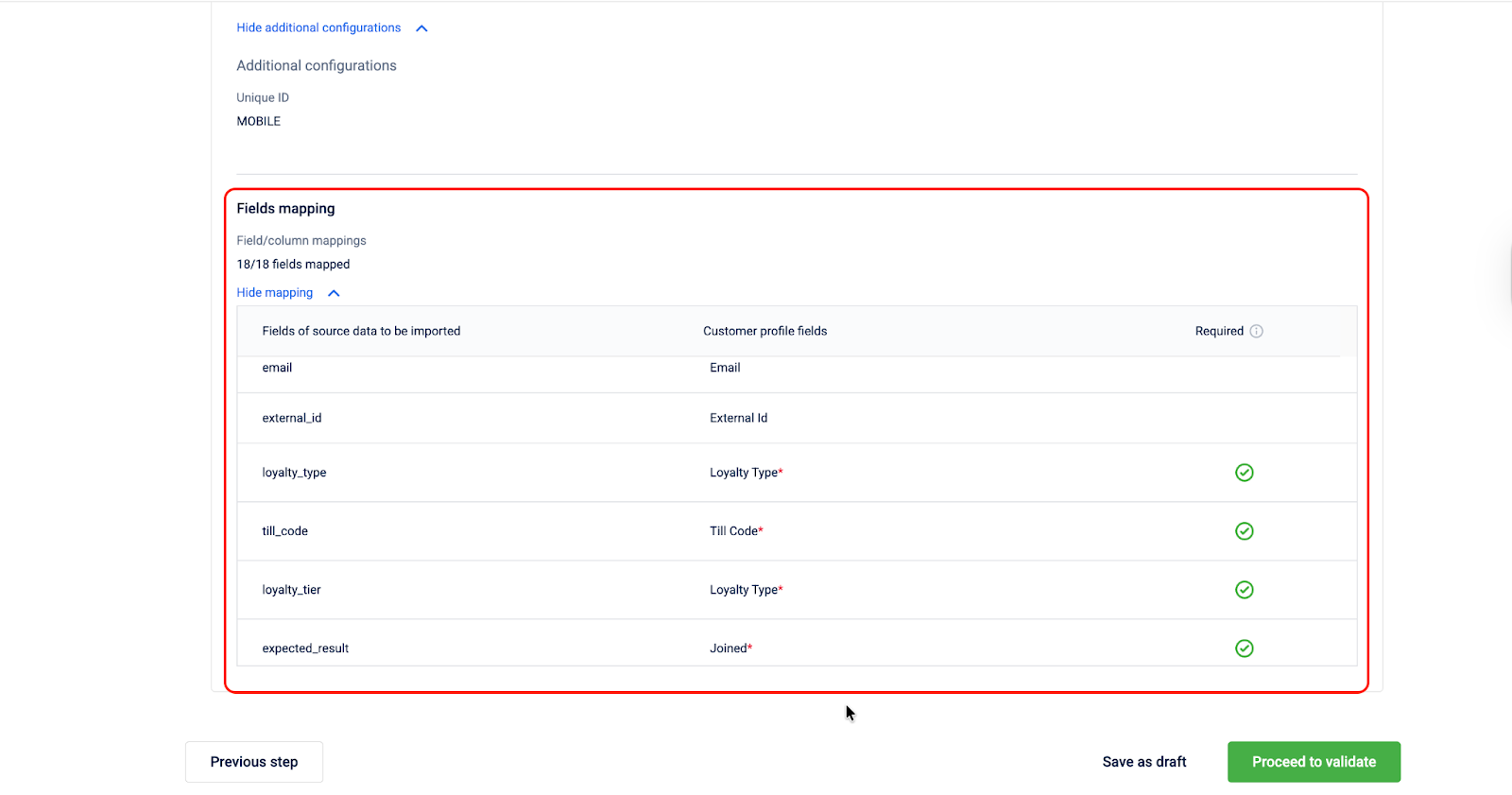

Step 3: Fields mapping

Map the fields from your source data to the corresponding customer profile fields to ensure accurate data import.

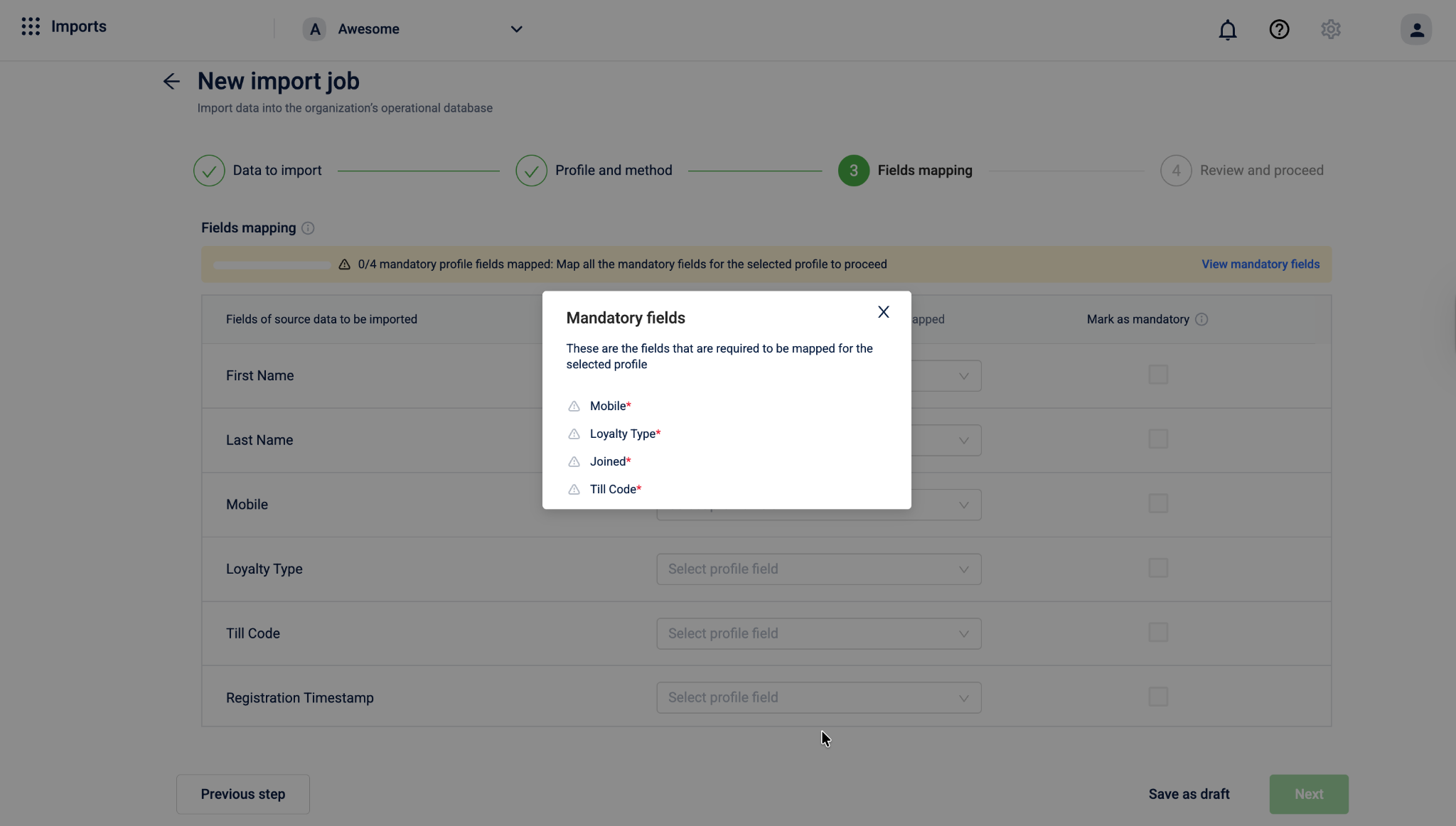

- In the Fields mapping section, review the list of source fields displayed under Fields of source data to be imported. The banner at the top shows how many mandatory profile fields still need to be mapped, for example: 0/4 mandatory profile fields mapped.

- Click View mandatory fields to see which fields are required for the selected profile. The modal lists all mandatory fields marked with `*`.

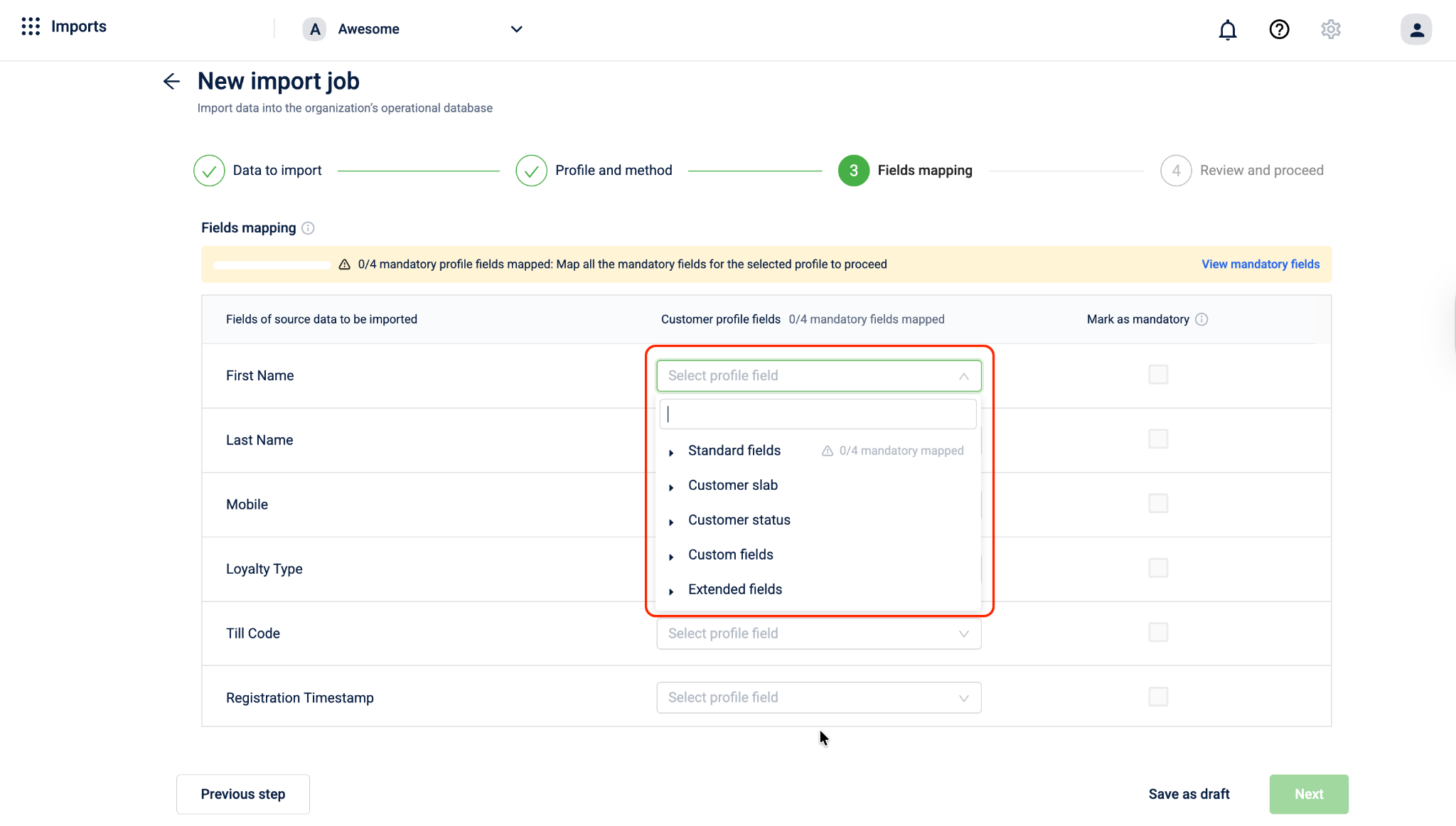

- For each source field, click Select profile field under Customer profile fields and select the appropriate field. Fields are organised into the following categories:

Note: When you map a field that is mandatory for the selected profile, the Mark as mandatory checkbox is automatically selected.

-

Track your mapping progress using the banner at the top, for example: 3/4 mandatory profile fields mapped. The Customer profile fields column header also shows a running count.

-

Optionally, select the Mark as mandatory checkbox next to any additional field to enforce it as mandatory for this import. Fields marked as mandatory must have valid values; otherwise, the record is marked as invalid during import.

Note: If you selected the Update import method, identifier fields such as mobile, email, and external ID cannot be updated. Mapping these fields has no effect. Only non-identifier fields are updated.

-

Once mapping is complete, do one of the following:

- Select Next to proceed to Step 4: Review and proceed.

- Select Save as draft to save the current configuration and continue later. A modal appears prompting you to enter a Job name and an optional Description. Click Save to store the draft. The job appears on the imports listing page, where you can return to edit and complete it later.

- Click Previous step to go back to Step 2: Profile and method.

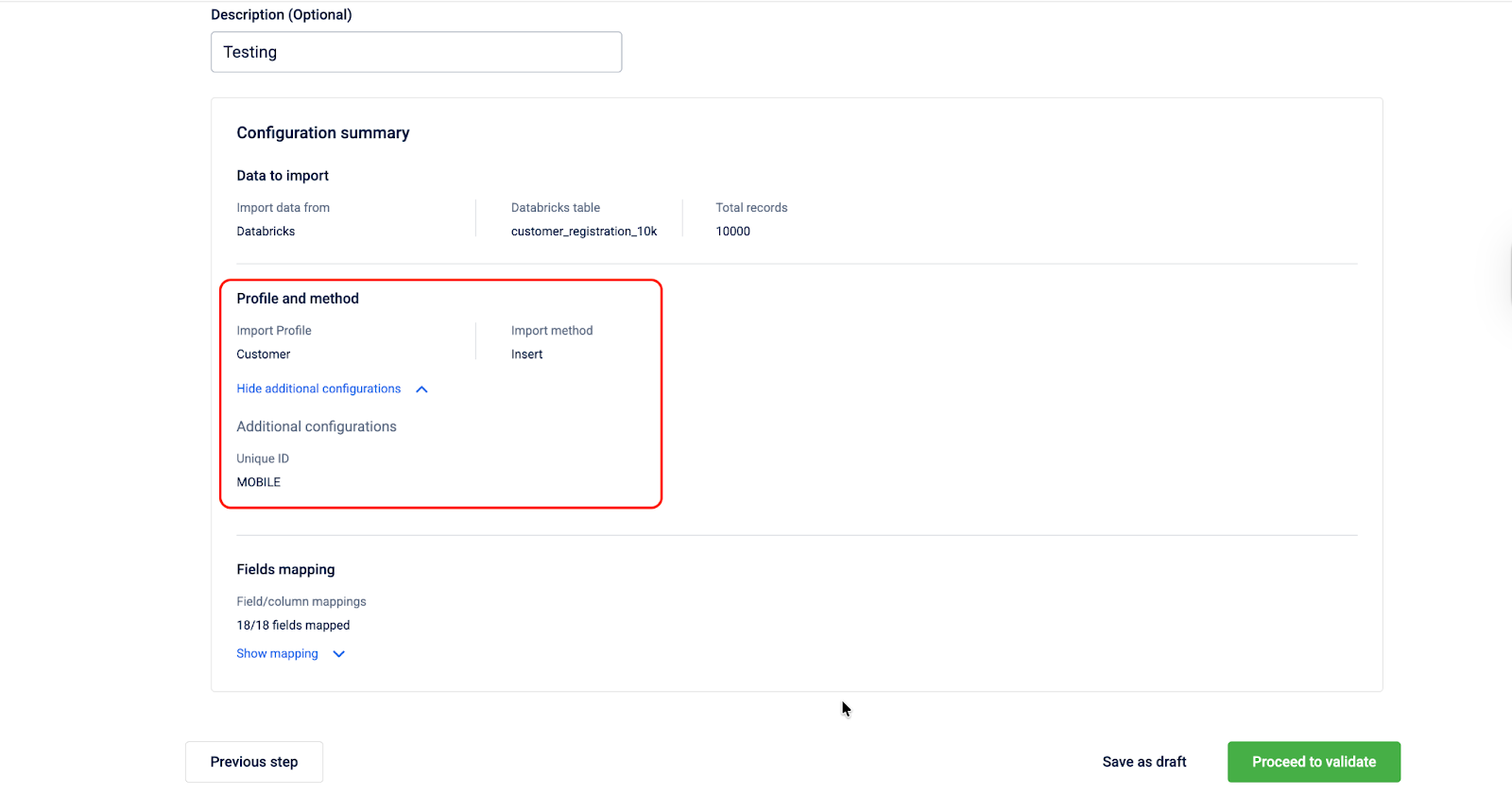
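The effect of Mark as mandatory in this step can be sketched as a simple record check: any record without a value for a mandatory field is treated as invalid. The function below is a minimal illustration under the assumption that "valid value" means non-empty; the platform's actual field-level validation rules are not reproduced here, and the field names are hypothetical.

```python
from typing import Dict, Iterable, List, Optional, Tuple

Record = Dict[str, Optional[str]]


def split_by_mandatory(
    records: Iterable[Record], mandatory_fields: List[str]
) -> Tuple[List[Record], List[Tuple[Record, List[str]]]]:
    """Split records into valid ones and (record, missing_fields) pairs."""
    valid: List[Record] = []
    invalid: List[Tuple[Record, List[str]]] = []
    for record in records:
        # A field counts as missing when it is absent, None, or blank.
        missing = [
            field
            for field in mandatory_fields
            if record.get(field) is None or not str(record[field]).strip()
        ]
        if missing:
            invalid.append((record, missing))
        else:
            valid.append(record)
    return valid, invalid


rows = [
    {"mobile": "9100000001", "city": "Pune"},
    {"mobile": "9100000002", "city": " "},
]
valid, invalid = split_by_mandatory(rows, ["mobile", "city"])
print(len(valid), [missing for _, missing in invalid])
```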

Step 4: Review and proceed

Review the complete configuration of your import job and submit it for validation.

To review and proceed, follow the steps given below.

-

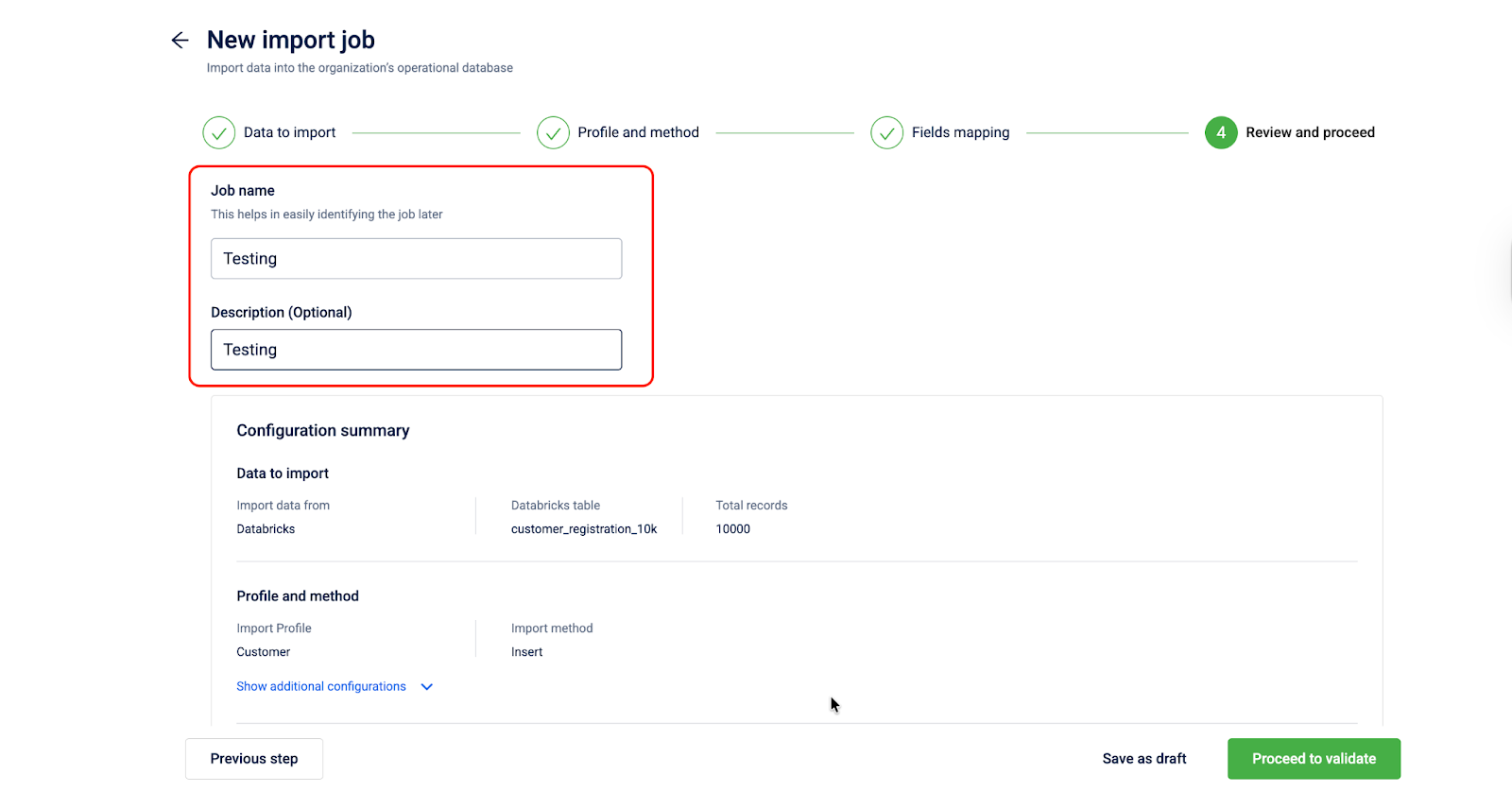

Enter a Job name to help identify the job later.

-

Optionally, enter a Description for this job.

-

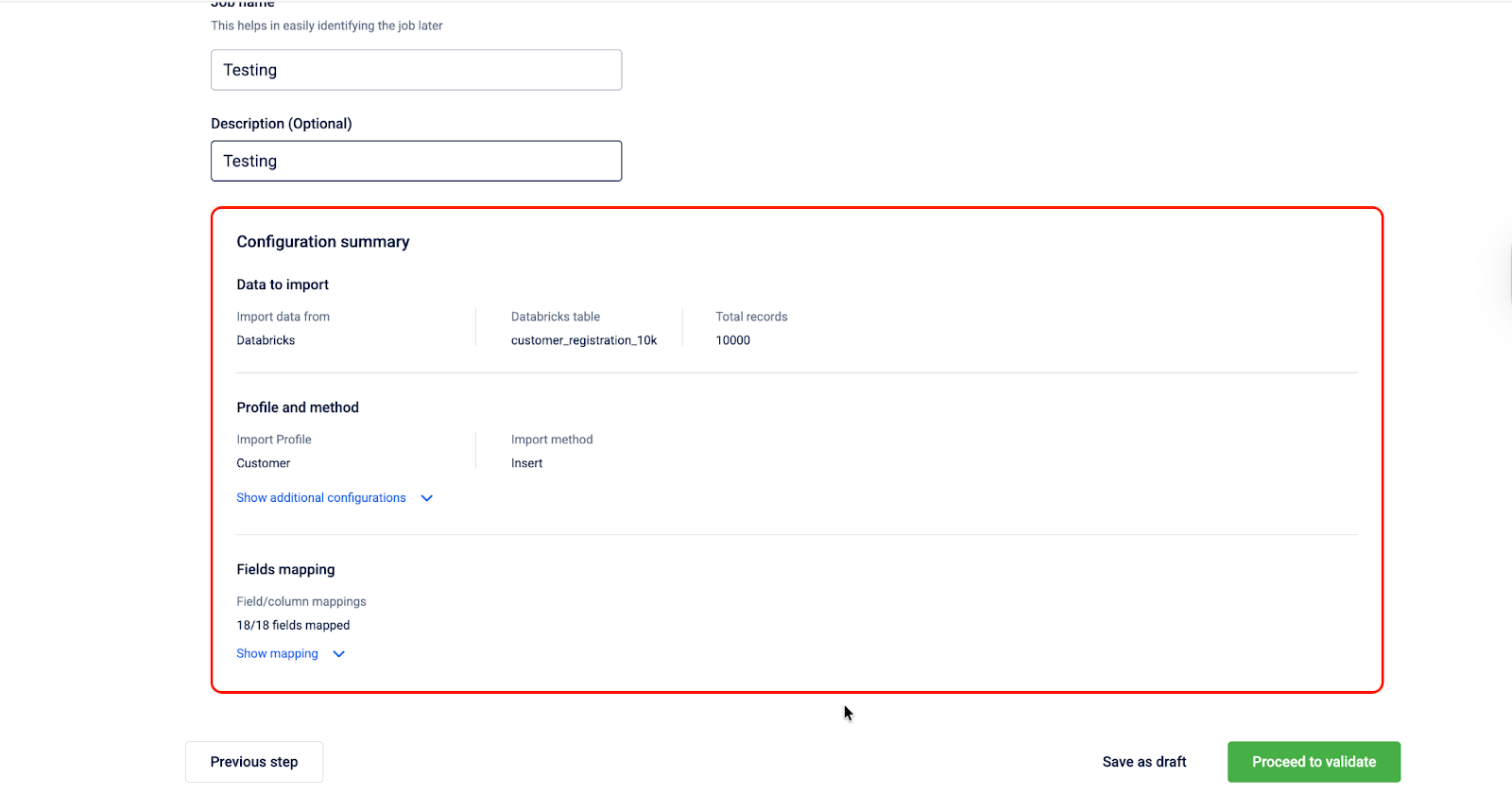

Review the Configuration summary. This section provides an overview of all settings configured across the previous steps. Verify each section before proceeding.

-

Navigate to the Profile and method section and click Show additional configurations to expand and view the additional settings configured in Step 2. Click Hide additional configurations to collapse the section.

-

Navigate to the Fields mapping section and click Show mapping to expand and view the complete field mapping. Fields marked with `*` and a green checkmark under Required indicate mandatory fields that have been mapped. Select Hide mapping to collapse the mapping table.

-

Once you have reviewed the configuration, do one of the following:

-

Select Previous step to go back to Step 3: Fields mapping.

-

Select Save as draft to save the current configuration and continue later. A modal appears prompting you to enter a Job name and an optional Description. Click Save to store the draft. The job appears on the imports listing page, where you can return to edit and complete it later.

-

Select Proceed to validate to submit the import job for validation. A confirmation modal appears. Click Yes, validate to confirm.

-

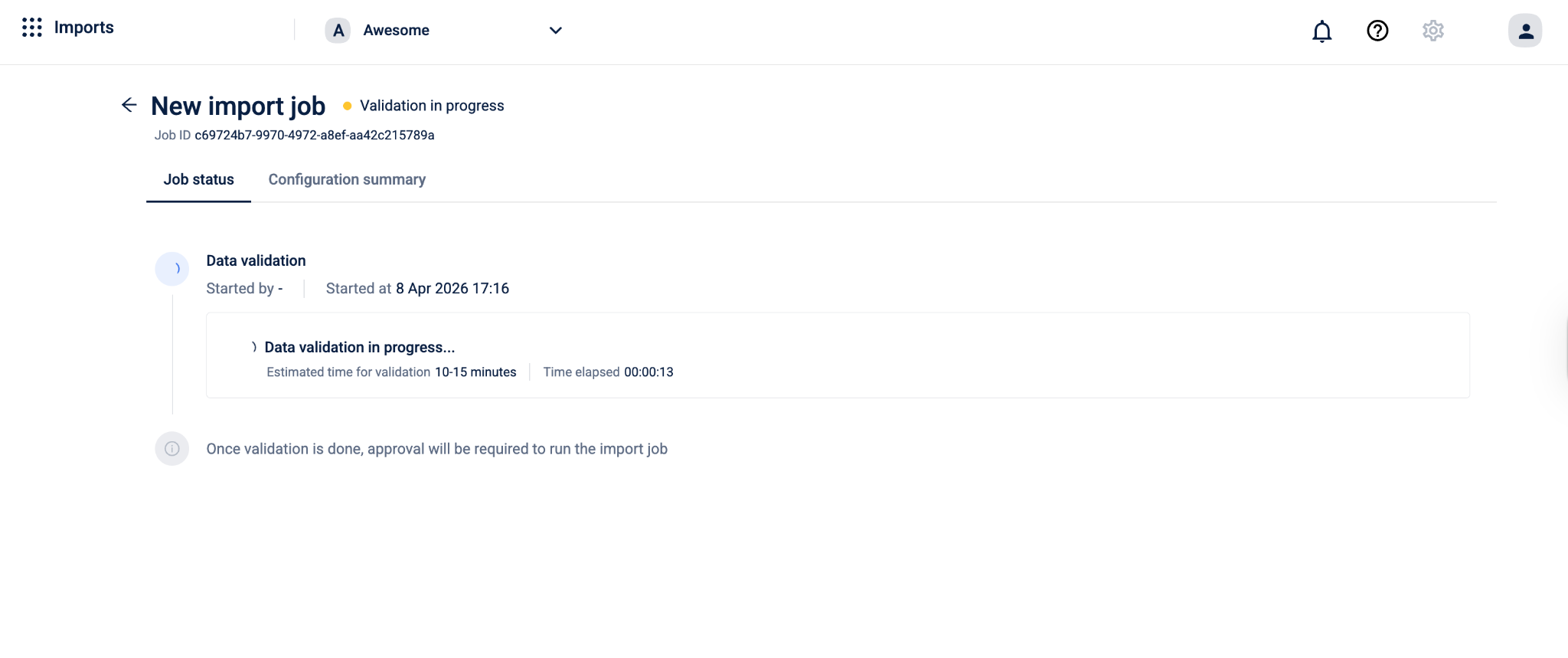

View Data Import Status

The Job status page lets you view the current status of your import job and track validation progress in real time.

Job details

The following details are displayed at the top of the page:

| Field | Description |
|---|---|
| Job name | The name entered for the import job during configuration. |
| Status | The current state of the job. Displays Validation in progress while validation is running. |
| Job ID | A unique system-generated identifier for the import job. |
| Description | The description entered during configuration, if provided. |

Tabs

The page has two tabs:

- Job status: Shows the current progress of the validation job.

- Configuration summary: Shows the complete configuration of the import job as set up across the four steps.

Job status tab

The Job status tab displays the following during validation:

Data validation

- Started by: The name of the user who triggered the validation.

- Started at: The date and time when validation began.

Data validation in progress...

- Estimated time for validation: The approximate time the validation is expected to take, for example, 10–15 minutes.

- Time elapsed: The time that has passed since validation started, displayed in `HH:MM:SS` format.
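For reference, an elapsed duration in seconds maps to the `HH:MM:SS` display as in the short sketch below. The `format_elapsed` helper is hypothetical and only illustrates the format; it is not part of the platform.

```python
def format_elapsed(total_seconds: int) -> str:
    """Format a non-negative number of seconds as HH:MM:SS."""
    if total_seconds < 0:
        raise ValueError("elapsed time cannot be negative")
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}"


print(format_elapsed(754))  # 00:12:34
```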

What Happens During Validation

The system validates every record in your source table against the rules defined for the profile and method you selected. Validation runs field by field and record by record. Records that pass all checks are marked as valid. Records that fail one or more checks are marked as invalid and are not written to the database.

You do not need to take any action during this stage. The system processes the data automatically.

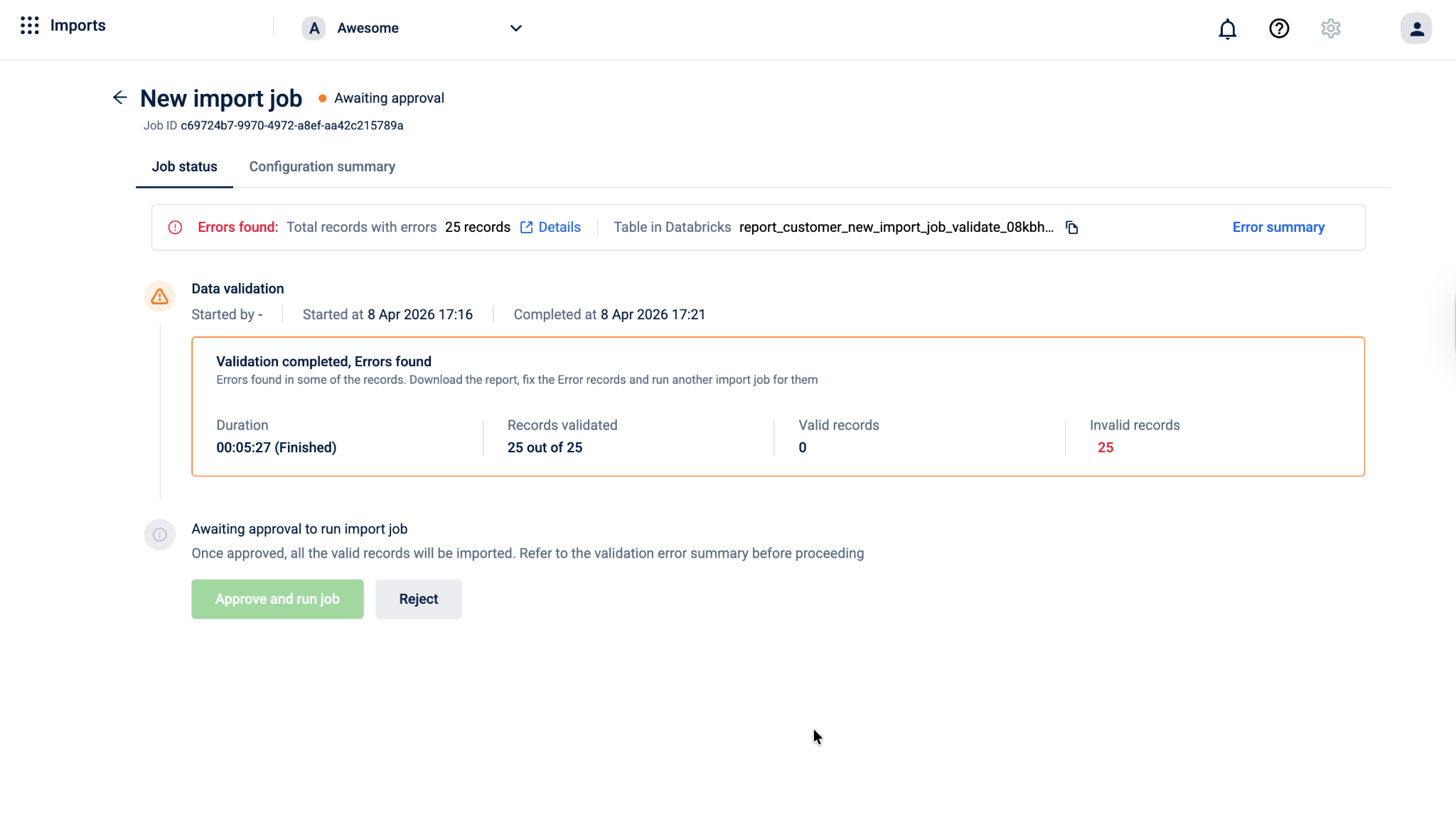

Once validation is complete, the job status page shows a summary of the results:

- Duration — how long the validation took to complete

- Records validated — the total number of records processed

- Valid records — the number of records that passed all checks and are ready to be imported

- Invalid records — the number of records that failed one or more checks

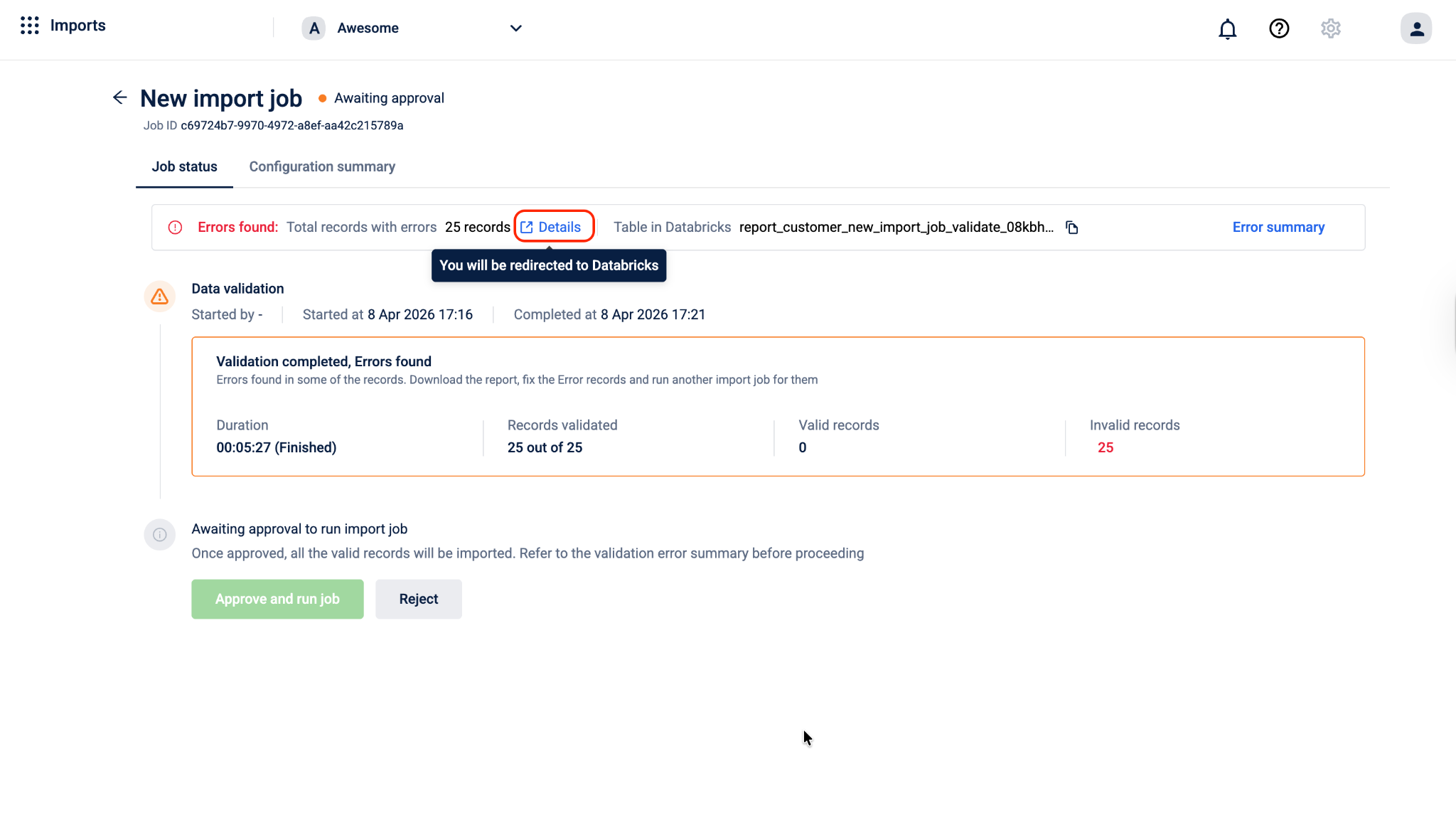

To check error details in Databricks, navigate to the Error section and select Details. You will be redirected to the Databricks page.

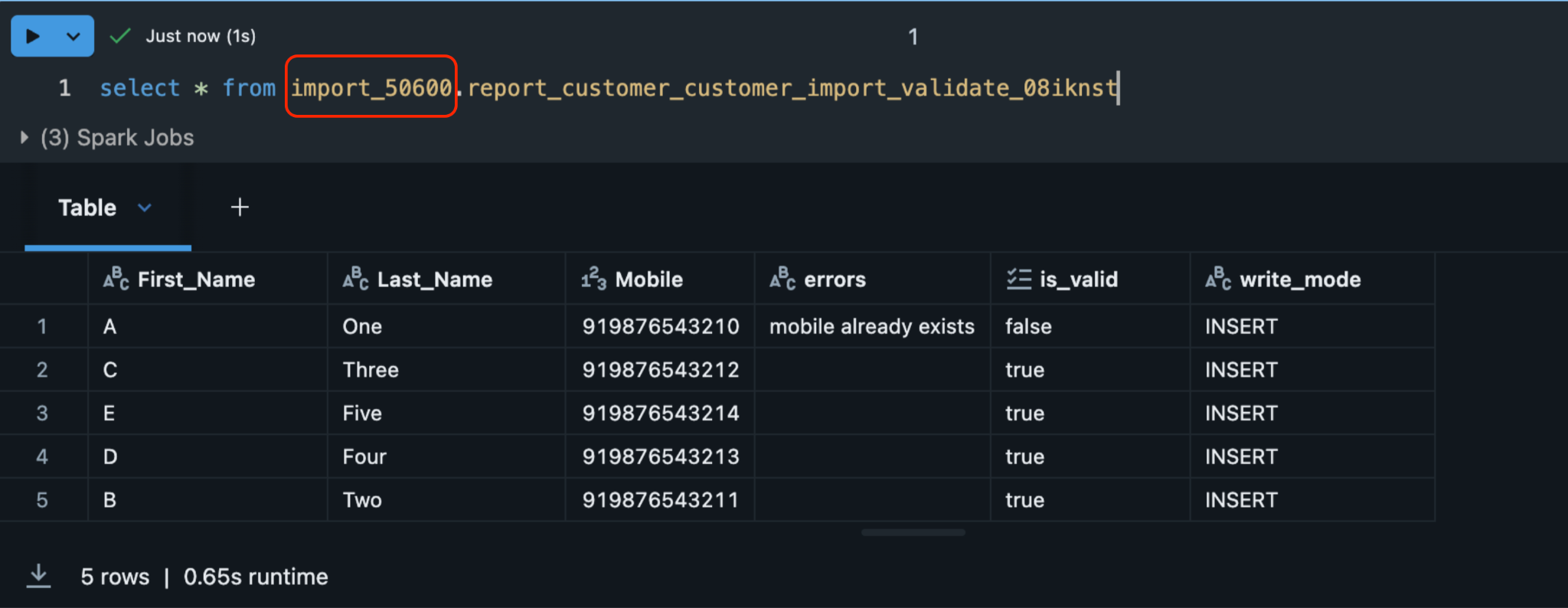

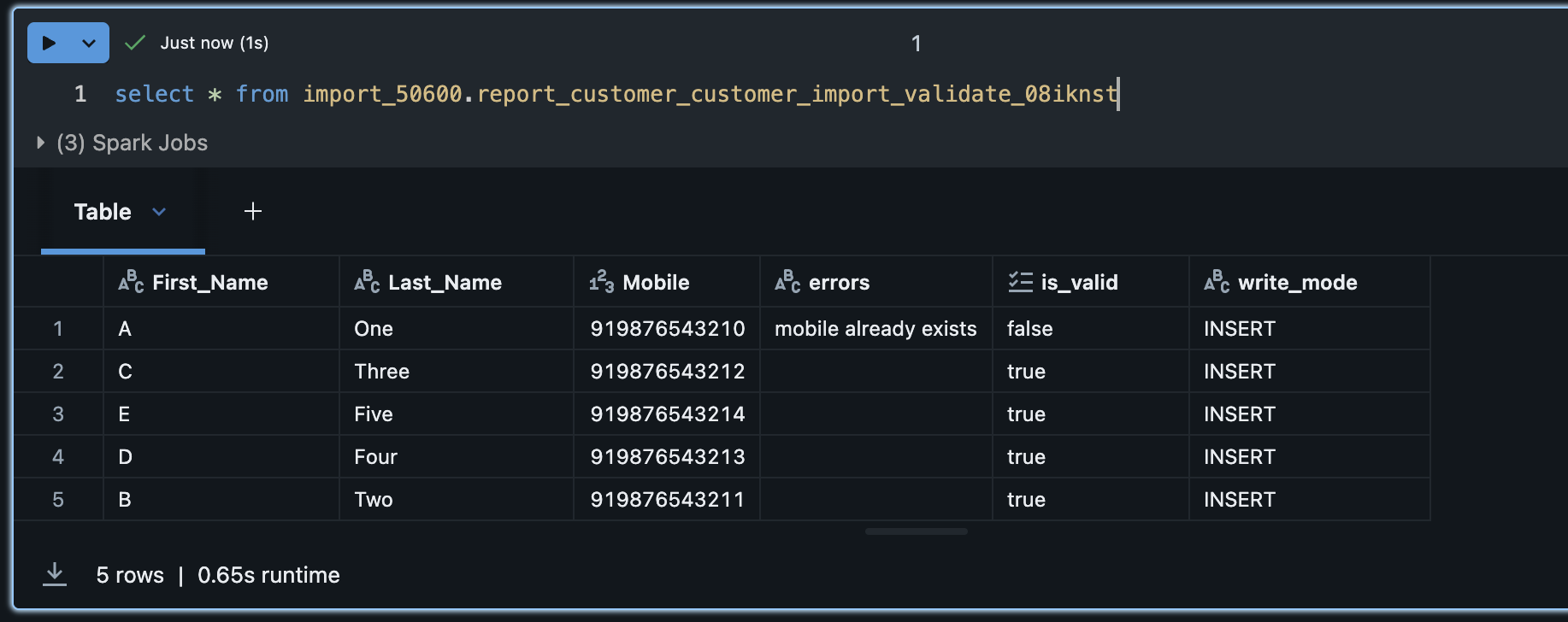

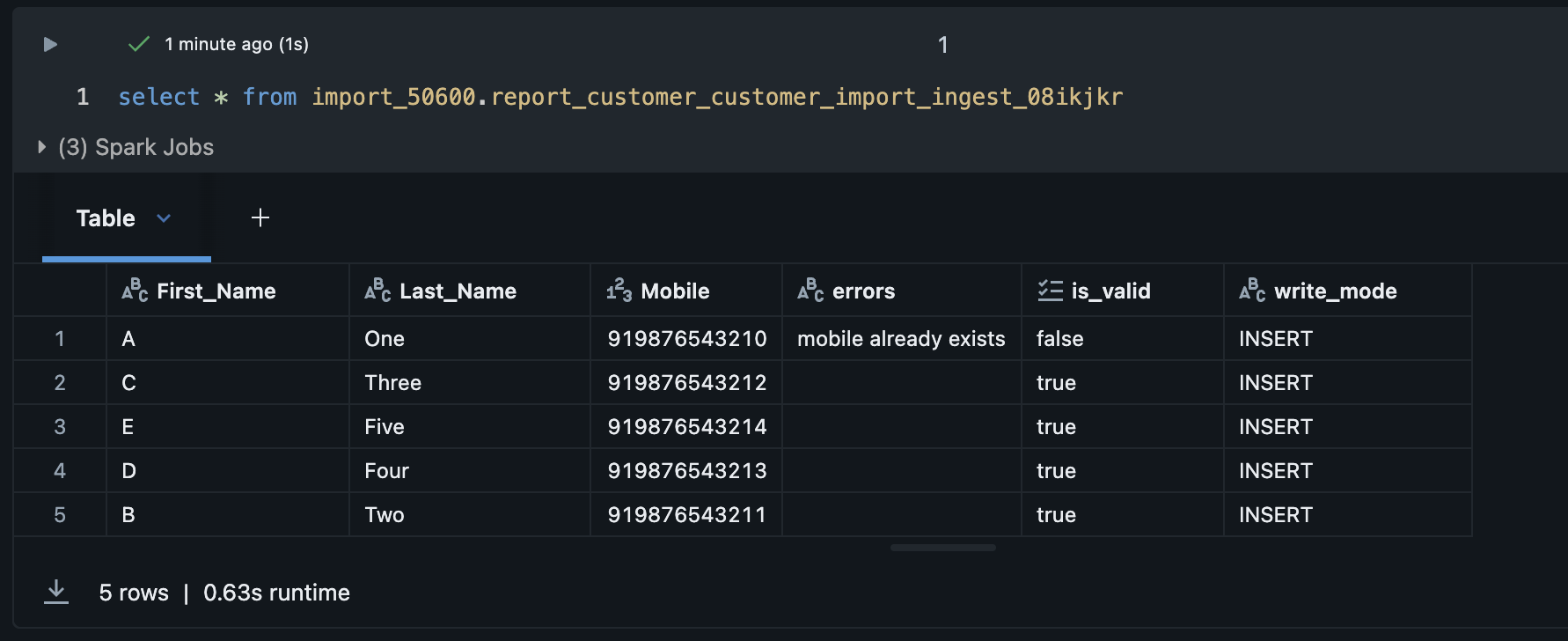

Validation Result Table in Databricks

After validation completes, the system automatically creates a result table in your organisation's Databricks workspace. This table contains every record from your source along with its validation status and, for records that failed, the specific error message logged against it.

The result table is created under the `import_<orgId>` database and follows this naming format:

`import_{orgId}.report_{profileType}_{job_name}_validate_{suffix}`

For example, a customer import job named testing would create a table like:

`import_1001.report_customer_testing_validate_08kar5z`

What happens after validation

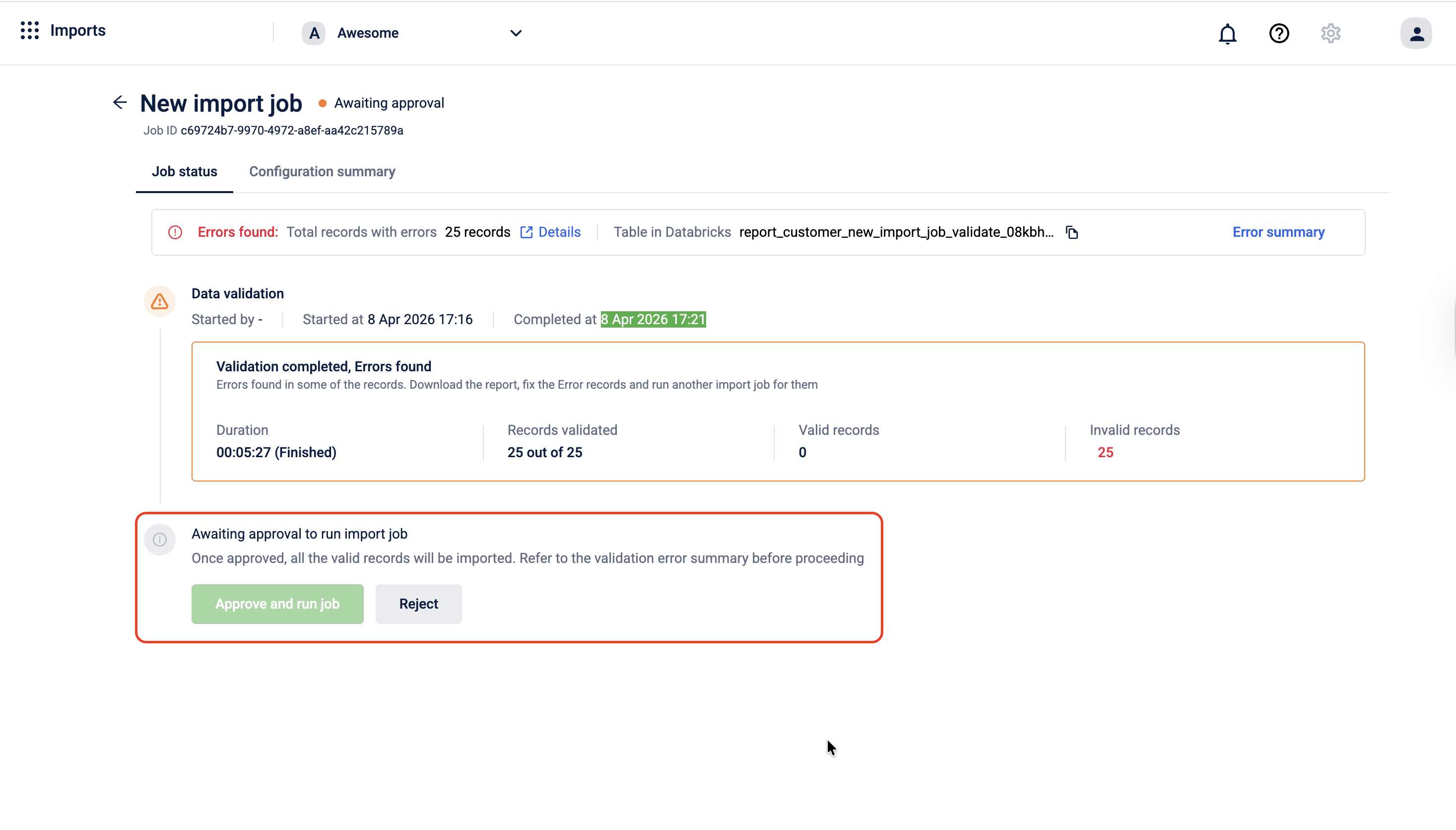

Once validation is complete, the job moves to an approval stage before the actual import runs.

On the job status page, you can do one of the following:

- If the validation results are acceptable, select Approve and run job. The system imports all valid records into the Capillary Database. Any invalid records are skipped and not imported.

- If the validation results are not acceptable (for example, too many records failed or the errors indicate a problem with the source data), select Reject. The job is cancelled and no data is written. You can go back, fix the issues in your source table, and create a new import job.

Note: Selecting Approve and run job imports only the records that passed validation. Invalid records are never written to the database, regardless of approval.

What Happens After Approval

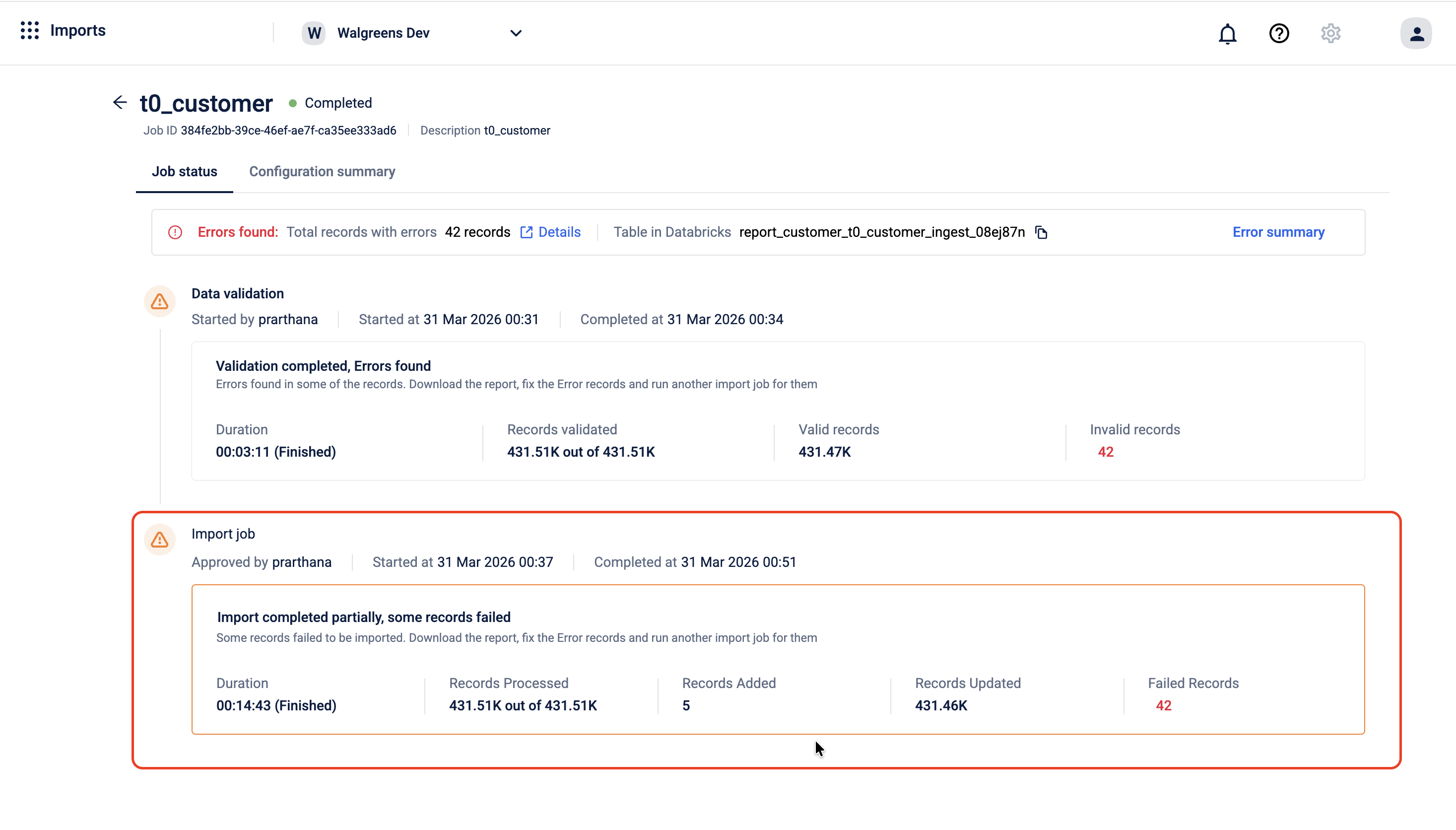

Once you approve the job, the system begins writing the valid records to the Capillary Database. The job status page updates in real time to reflect the progress of the import.

When the import is complete, the page shows a summary of the run:

- Duration - how long the import took to complete

- Records processed - the total number of records the system attempted to write

- Records added - the number of new records successfully created in the database

- Records updated - the number of existing records successfully updated in the database

- Failed records - the number of records that could not be written, shown in red

If some records failed during the import, the page shows the message Import completed partially, some records failed. This means the import ran but not all records were written to the database successfully. The records that were processed are already in the database. For the ones that failed, download the error report, fix the data in your source table, and run a new import job for those records.
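To prepare a follow-up job for the failed records, you could filter them out of the downloaded error report. The sketch below assumes the report is a CSV with a `status` column whose failed rows are marked `FAILED`; the file format, column name, and status value are all assumptions, so adjust them to match the report you actually receive.

```python
import csv
import io
from typing import Dict, List


def failed_rows(report_csv: str, status_column: str = "status", failed_value: str = "FAILED") -> List[Dict[str, str]]:
    """Return the rows of a CSV report whose status column marks them as failed."""
    reader = csv.DictReader(io.StringIO(report_csv))
    return [
        row
        for row in reader
        if (row.get(status_column) or "").strip().upper() == failed_value
    ]


# Hypothetical report contents, for illustration only.
report = (
    "mobile,status,error\n"
    "9100000001,SUCCESS,\n"
    "9100000002,FAILED,USER_INSERTION_FAILED\n"
)
for row in failed_rows(report):
    print(row["mobile"], row["error"])
```

The filtered rows can then be corrected and loaded into a new source table for the retry job.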

Ingest Result Table in Databricks

After the import run completes, the system creates a second result table in Databricks — separate from the validation table. This ingest table captures the outcome of the actual write operation, including which records were written successfully and which failed at the database level.

The ingest table follows this naming format:

`import_{orgId}.report_{profileType}_{job_name}_ingest_{suffix}`

For example:

`import_1001.report_customer_testing_ingest_08kar5z`

Together, the validate table and the ingest table give you a complete audit trail for every import job: what was validated, what passed, and what was actually written to the database.
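The two naming formats differ only in the stage segment, so a small helper can assemble either name once you know the suffix. This is a sketch: `report_table_name` is a hypothetical helper, the suffix is generated by the system and must be read from Databricks, and any normalisation the platform applies to job names (for example, spaces) is not modelled here.

```python
def report_table_name(org_id: int, profile_type: str, job_name: str, stage: str, suffix: str) -> str:
    """Build a fully qualified result table name for the validate or ingest stage."""
    if stage not in ("validate", "ingest"):
        raise ValueError("stage must be 'validate' or 'ingest'")
    return f"import_{org_id}.report_{profile_type}_{job_name}_{stage}_{suffix}"


print(report_table_name(1001, "customer", "testing", "validate", "08kar5z"))
# import_1001.report_customer_testing_validate_08kar5z
print(report_table_name(1001, "customer", "testing", "ingest", "08kar5z"))
# import_1001.report_customer_testing_ingest_08kar5z
```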

Databricks Tables Summary

| Table | When it is created | What it contains |
|---|---|---|
| `report_{profileType}_{job_name}_validate_{suffix}` | After validation completes | Every record with its validation status, plus error messages for failed records. |
| `report_{profileType}_{job_name}_ingest_{suffix}` | After the import run completes | Every record with its status, plus error details for failed records. |

Troubleshooting

This section helps identify and resolve issues encountered during the import process. The following table lists common validation errors, their meaning, and the steps to fix them.

Records failed validation

After validation completes, the import job details page shows the total records processed, records successfully written, and records marked as invalid. If records failed, download the error report and use the table below to identify and fix the issue.

| Error message | What it means | How to fix |
|---|---|---|
| At least one identifier required | The record has no mobile, email, or external ID. | Add at least one valid identifier to the record in your source table. |
| mobile already exists | The mobile number is already registered to another customer. | Remove the duplicate or use the Update method to modify the existing customer. |
| email already exists | The email is already registered to another customer. | Remove the duplicate or use the Update method to modify the existing customer. |
| external_id already exists | The external ID is already in use by another customer. | Remove the duplicate or use the Update method to modify the existing customer. |
| slab name is invalid | The slab name does not match any slab in the loyalty program. | Verify the slab name against your loyalty program configuration and correct the value in your source table. |
| slab cannot be attached to non loyalty customer | A slab was provided for a customer whose loyalty type is Non-Loyalty. | Remove the slab value for non-loyalty customers, or correct the loyalty type in your source table. |
| no slab change found | The slab name provided is the same as the customer's current slab. | No action needed if this is expected. Remove the record from the import if no change is required. |
| status label is invalid | The status label is null or does not match any status configured for your organisation. | Check your organisation's configured status labels and correct the value in your source table. |
| Deleted status cannot be changed | The customer's current status is Deleted. This status cannot be updated through import. | Remove this record from the import. Deleted customer status cannot be changed through the Data Import framework. |
| Pending Deletion status cannot be changed | The customer's current status is Pending Deletion. This status cannot be updated through import. | Remove this record from the import. Pending Deletion status cannot be changed through the Data Import framework. |
| no status change found | The status provided is the same as the customer's current status. | No action needed if this is expected. Remove the record from the import if no change is required. |
| USER_INSERTION_FAILED | The record passed all validation checks but could not be written to the database. | See USER_INSERTION_FAILED below. |
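When a report contains many failures, tallying them by error message shows which fix from the table above to start with. The sketch below assumes each report row exposes the message under an `error_message` key; that field name is hypothetical and should be replaced with the actual column in your report.

```python
from collections import Counter
from typing import Dict, Iterable, Optional


def count_errors(rows: Iterable[Dict[str, Optional[str]]], message_field: str = "error_message") -> Counter:
    """Count report rows per error message, ignoring rows without an error."""
    return Counter(row[message_field] for row in rows if row.get(message_field))


# Hypothetical report rows, for illustration only.
report_rows = [
    {"mobile": "9100000001", "error_message": "mobile already exists"},
    {"mobile": "9100000002", "error_message": "slab name is invalid"},
    {"mobile": "9100000003", "error_message": "mobile already exists"},
    {"mobile": "9100000004", "error_message": None},
]
for message, count in count_errors(report_rows).most_common():
    print(f"{count:>4}  {message}")
```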

Scenarios

Table not appearing in the dropdown

Symptom: A table you created in Databricks does not appear in the Select the table dropdown in Step 1.

What to check:

- Confirm the table exists in Databricks under the `import_<orgid>` database. Tables in other databases or schemas are not listed.

- Wait up to 10 minutes after table creation.

- Refresh the page and check again.

When to escalate: If the table does not appear after 10 minutes and you have confirmed it exists under the correct database, raise a ticket with the Capillary support team and provide the org ID and table name.

Upsert method not available

Symptom: The Upsert method is greyed out or not selectable in Step 2.

What to check:

- Upsert is disabled when Identifier Flexibility is enabled for your organisation. These two configurations are mutually exclusive.

- Check with your organisation administrator to confirm whether Identifier Flexibility is active.

When to escalate: If you need Upsert enabled and Identifier Flexibility disabled (or vice versa), raise a ticket with the Capillary support team. Provide the org ID and the required configuration.


USER_INSERTION_FAILED

Symptom: One or more records show USER_INSERTION_FAILED in the error report after validation completes.

What it means: The record passed all validation checks but could not be written to the database at the write stage.

What to check:

- Check whether the error affects a small number of isolated records or the majority of the batch. Isolated failures may indicate a data issue; bulk failures may indicate a system-level problem.

- Retry the import for the affected records as a separate job before escalating.

When to escalate: If the error persists after a retry, raise a ticket with the Capillary support team. Include the following information:

- Job ID

- Org ID

- Number of affected records

- Whether the error is isolated or affects the majority of the batch
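To state whether the failure is isolated or affects the majority of the batch, a simple ratio is usually enough. The sketch below treats anything above half of the batch as a majority; the `failure_scope` helper and its threshold parameter are illustrative assumptions, not platform rules.

```python
def failure_scope(affected: int, total: int, bulk_threshold: float = 0.5) -> str:
    """Classify failures as 'none', 'isolated', or 'bulk' based on the affected share."""
    if total <= 0 or affected < 0 or affected > total:
        raise ValueError("expected 0 <= affected <= total and total > 0")
    if affected == 0:
        return "none"
    # More than half of the batch is treated as a majority (assumed threshold).
    return "bulk" if affected / total > bulk_threshold else "isolated"


print(failure_scope(3, 10_000))      # isolated
print(failure_scope(6_000, 10_000))  # bulk
```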
